Lyric Transcription in Noisy Environments

A course project exploring how to make lyric transcription work in noisy, real-world audio: café recordings, concert clips, and phone-captured music. (GCT 634 Musical Applications of Machine Learning Project)

To read the full report, see: (Report PDF: Click here)

To browse the implementation, visit the project repository: Click here.

Project Overview — Lyric Transcription in Noisy Environments

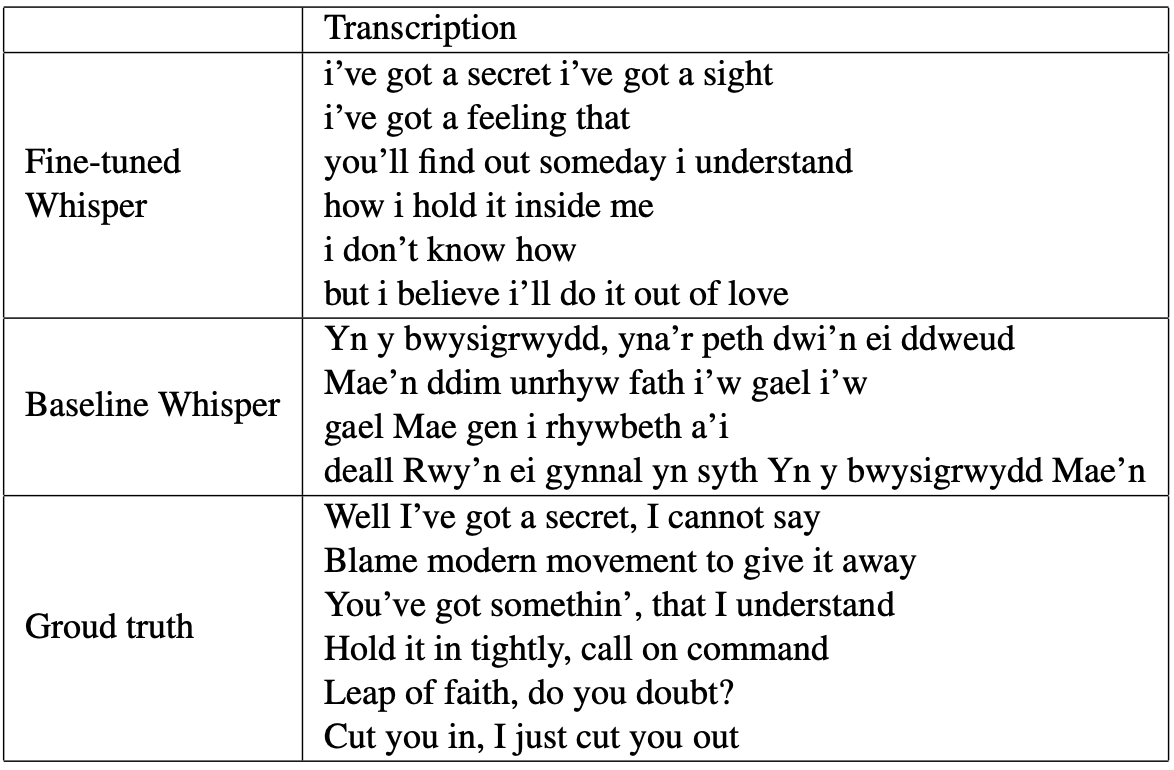

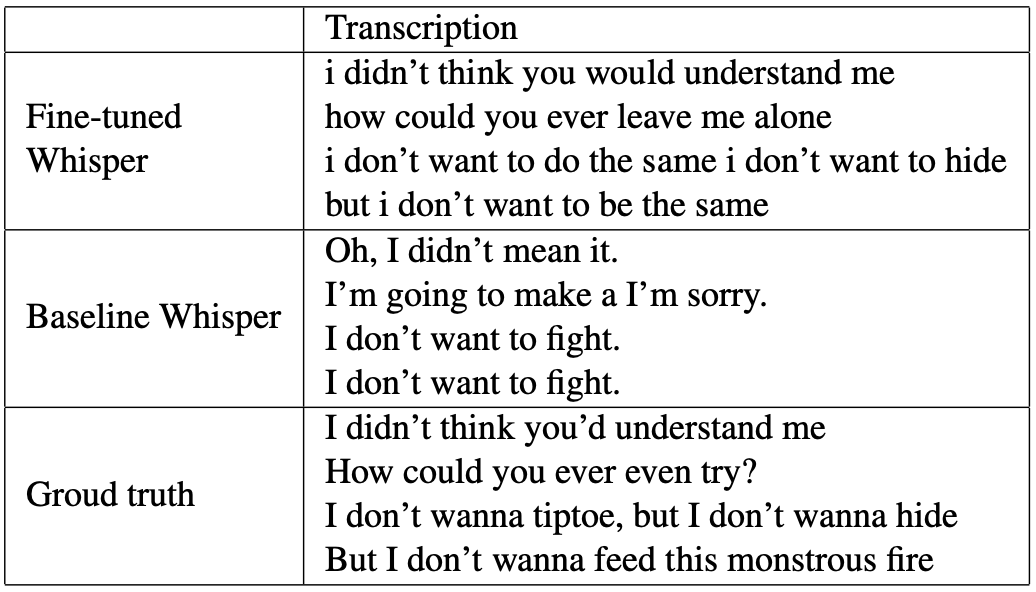

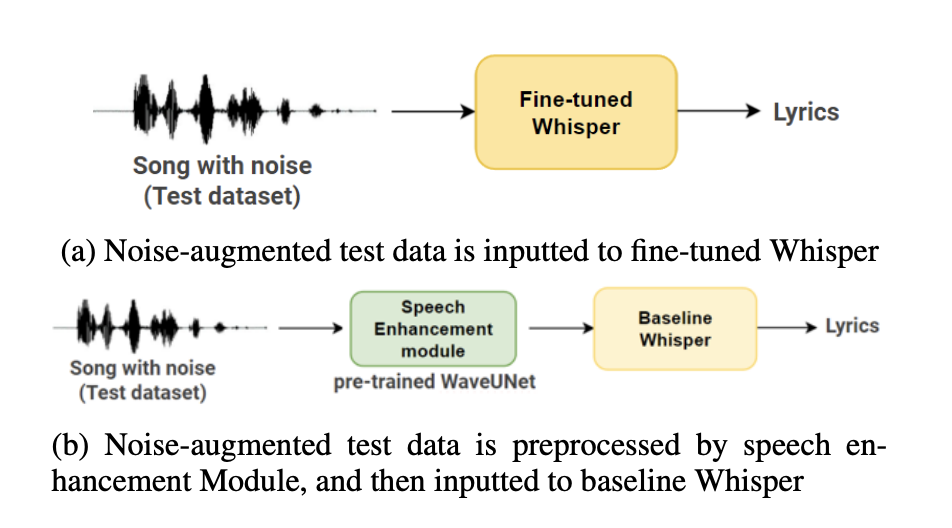

This project explores how to make lyric transcription work in noisy, real-world audio such as café recordings, concert clips, and phone-captured music. Whisper-medium performs well on clean speech, but its accuracy degrades sharply once background noise enters the signal. To handle this, I fine-tuned Whisper-medium on the JamendoLyrics dataset augmented with MUSAN noise across a range of signal-to-noise ratio (SNR) levels.
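The noise augmentation described above comes down to scaling a noise clip so that the mixture hits a chosen SNR. The following is a minimal, dependency-free sketch of that idea, not the project's actual pipeline: the function names `mix_at_snr` and `measured_snr_db` are hypothetical, and the audio is represented as plain lists of float samples (a real pipeline would more likely use NumPy or PyTorch tensors loaded from JamendoLyrics and MUSAN files).

```python
import math


def mix_at_snr(signal, noise, snr_db):
    """Add `noise` to `signal`, scaled so the mixture has the requested SNR in dB.

    Both inputs are sequences of float samples (an assumption for this sketch).
    """
    if not signal or not noise:
        raise ValueError("signal and noise must be non-empty")

    # Loop the noise clip if it is shorter than the music clip, then trim it.
    noise = list(noise)
    if len(noise) < len(signal):
        repeats = len(signal) // len(noise) + 1
        noise = noise * repeats
    noise = noise[: len(signal)]

    signal_power = sum(x * x for x in signal) / len(signal)
    noise_power = sum(n * n for n in noise) / len(noise)
    if signal_power == 0 or noise_power == 0:
        raise ValueError("signal and noise must both have non-zero power")

    # SNR_dB = 10 * log10(P_signal / (gain^2 * P_noise)), solved for gain.
    gain = math.sqrt(signal_power / (noise_power * 10 ** (snr_db / 10)))
    return [x + gain * n for x, n in zip(signal, noise)]


def measured_snr_db(signal, mixture):
    """Recover the SNR of a mixture, given the clean signal it was built from."""
    residual = [m - s for s, m in zip(signal, mixture)]
    signal_power = sum(s * s for s in signal)
    residual_power = sum(r * r for r in residual)
    return 10 * math.log10(signal_power / residual_power)
```

Calling `mix_at_snr(clean, noise, -2.0)` on each training clip, with the SNR drawn from the range under study, would produce the kind of augmented data the fine-tuning relies on. Lower SNR values mean louder noise relative to the music.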

This change had a major effect: the fine-tuned model achieved a word error rate (WER) of 18.05%, while the baseline Whisper model with a Speech Enhancement front-end had a much higher WER of 63.45%. Fine-tuning remained stable at SNR values above −2 dB, became unstable at −3 dB, and overfit below −3 dB. Using this insight, I selected the most stable model and tested it on my own recordings (café audio and low-quality concert samples), where it consistently outperformed the baseline.
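For readers unfamiliar with the metric, WER is the word-level edit distance between the reference lyrics and the transcription, divided by the number of reference words. The sketch below illustrates that definition only; the function name `word_error_rate` is hypothetical, and the text normalization (lowercasing and whitespace splitting) is an assumption that may differ from what the report uses.

```python
def word_error_rate(reference, hypothesis):
    """Word-level Levenshtein distance divided by the reference length."""
    # Assumed normalization: lowercase and split on whitespace.
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution_cost = 0 if ref_word == hyp_word else 1
            curr.append(
                min(
                    prev[j] + 1,                      # deletion
                    curr[j - 1] + 1,                  # insertion
                    prev[j - 1] + substitution_cost,  # match or substitution
                )
            )
        prev = curr
    return prev[-1] / len(ref)
```

As an example, comparing the reference "hold me close tonight" with the hypothesis "hold me closed" gives one substitution and one deletion over four reference words, so a WER of 0.5. Because insertions count as errors, WER can exceed 100% on very noisy audio.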

I also analyzed how Whisper’s internal layers behave under clean and noisy audio. After fine-tuning, the final convolutional and transformer layers showed smaller activation differences between clean and noisy versions of the same input, which suggests that the model learns more noise-robust representations. This is consistent with the improved transcription accuracy.
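One simple way to quantify such a clean-versus-noisy gap is the mean absolute difference between the two sets of activations at each layer. The sketch below assumes that measure; the report may use a different one. The function names and the layer names in the usage note are hypothetical, and the activations are assumed to have already been captured from the model (for instance with PyTorch forward hooks) and flattened into lists of floats.

```python
def mean_abs_difference(clean, noisy):
    """Mean absolute element-wise difference between two flattened activations."""
    if len(clean) != len(noisy) or not clean:
        raise ValueError("activations must be non-empty and the same length")
    return sum(abs(c - n) for c, n in zip(clean, noisy)) / len(clean)


def layer_gaps(clean_activations, noisy_activations):
    """Map each layer name to its clean-vs-noisy activation gap.

    Both arguments are dicts of the form {layer_name: flattened activations}.
    """
    return {
        name: mean_abs_difference(clean_activations[name], noisy_activations[name])
        for name in clean_activations
    }
```

Running `layer_gaps` once on the baseline model and once on the fine-tuned model, with the same clean and noisy inputs, would let the per-layer gaps be compared side by side. A smaller gap after fine-tuning at a layer such as a hypothetical `"encoder.final"` would match the behavior described above.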

Overall, this project shows that noise-augmented fine-tuning is more reliable than using external Speech Enhancement for lyric transcription. It also opens space for future work: dynamic SNR scheduling, larger Whisper variants, and multi-stage pipelines that combine source separation with noise-robust training.